When cars first appeared on American streets around 1903, the only safety feature was a horn. That was the extent of it. You could buy a two-ton machine capable of 45 miles an hour, and the manufacturer's answer to safety was: honk.

People started dying almost immediately. By 1913, the death rate was 33 per 10,000 vehicles, roughly 20 times what it is today. The response was what you'd expect. Speed limits. Driver's licenses. Traffic signals. Driver's education. For fifty years, everyone tried to fix the problem by making drivers more careful. It barely worked. Deaths kept climbing as more cars hit the road.

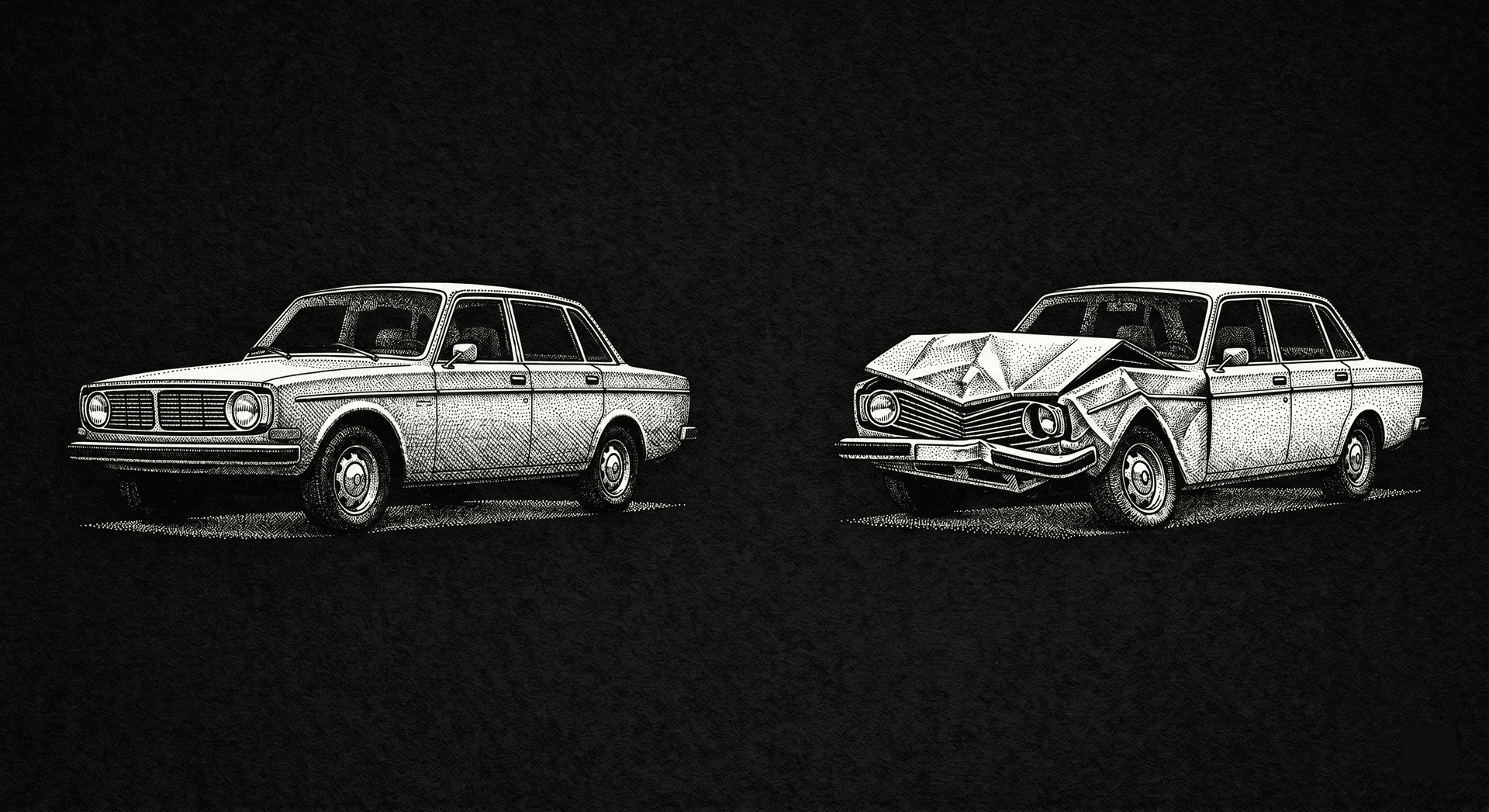

What actually fixed it was something nobody had tried: changing the car. Early cars were built rigid, to protect the vehicle. Then, in the 1950s, engineers started designing cars to crumple, to absorb energy and fail in a way that protected the person inside. Crumple zones. Three-point seatbelts. Later, airbags. Deaths per vehicle fell 95% over the next seventy years.

The insight was simple, but it took decades to see. You couldn't fix the problem by making people more careful. Even if every driver had tried, the number of cars was growing too fast for carefulness to keep up. You had to change what happens when things go wrong.

I think about this whenever I read about a software breach.

—

In the last month alone there have been a lot of them. Trivy. LiteLLM. Axios, which for three hours was quietly shipping a remote access trojan to anyone who ran `npm install`. And this week, Vercel: access keys, source code, and GitHub tokens offered for sale on a forum.

At first glance, these look like unrelated failures. Different companies, different stacks, different mistakes. They’re not. What matters isn’t how attackers broke in. It’s what they were able to do once they were inside.

Secrets. Tokens. Keys. Ready to be copied. Ready to be sent somewhere else. Now you’re left trying to answer questions you can’t reliably answer. Which secrets were taken. What they unlock. Whether they’re still being used. So you rotate everything. Slowly. Manually. Hoping you didn’t miss one. Hoping nothing breaks.

—

These breaches are only accelerating, and the sheer volume of code is a big part of why. There were 1 billion GitHub commits in all of 2025. Now there are 275 million per week, on pace for 14 billion this year even if that weekly rate merely holds steady (it won’t). Everyone is writing code now, but the number of reviewers hasn’t changed. Nobody reads 10,000 lines of AI-generated code the way they used to read 100 lines of their own. They skim it, approve it, and ship it.

This is fine until an AI commits a .env file, or hardcodes a token in a CI config, or pulls in a package that got hijacked yesterday. Then it isn't fine at all.
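Catching a committed `.env` file or a hardcoded key before it ships is largely pattern matching. Below is a minimal, hypothetical pre-commit check in Python, assuming just two illustrative rules (the `AKIA` prefix of AWS access key IDs and the `ghp_` prefix of classic GitHub personal access tokens) plus a deny-list of `.env` file names. `scan_text` and the rule names are made up for this example; dedicated scanners such as gitleaks ship hundreds of rules and entropy checks, so treat this as the shape of the idea rather than a replacement.

```python
import re
from pathlib import PurePath

# Illustrative rules only (hypothetical rule names); real scanners use far more.
SECRET_PATTERNS = {
    # AWS access key IDs: "AKIA" followed by 16 uppercase letters or digits.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # Classic GitHub personal access tokens: "ghp_" plus 36 alphanumerics.
    "github_pat": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
}

# File names that should essentially never be committed.
FORBIDDEN_FILES = {".env", ".env.local", ".env.production"}


def scan_text(path: str, text: str) -> list[tuple[str, int, str]]:
    """Return (path, line_number, rule_name) for each suspected secret.

    Line number 0 means the file itself is forbidden, regardless of content.
    """
    findings = []
    if PurePath(path).name in FORBIDDEN_FILES:
        findings.append((path, 0, "forbidden_file"))
    for lineno, line in enumerate(text.splitlines(), start=1):
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((path, lineno, rule))
    return findings


if __name__ == "__main__":
    # A CI config with a hardcoded key (AWS's documented example key ID).
    ci_config = (
        "steps:\n"
        "  - run: ./deploy.sh\n"
        "    env:\n"
        "      AWS_ACCESS_KEY_ID: AKIAIOSFODNN7EXAMPLE\n"
    )
    for finding in scan_text(".github/workflows/deploy.yml", ci_config):
        print(finding)
    # prints ('.github/workflows/deploy.yml', 4, 'aws_access_key_id')
```

Wired into a pre-commit hook or CI step, a non-empty result would fail the build. It catches some leaks, not all of them, which is exactly why scanning alone isn't enough.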

We've been here before. A new technology arrives. Demand explodes. The old safety practices, which worked fine at the old scale, stop working at the new one.

—

When something goes wrong in software, the instinct is to add process. A breach happens, and the response is a new review step. Another breach, another step. Eventually you have a 15-step approval process and engineers who spend more time filling out forms than writing code.

This is postmortem-driven development. You write the code. It breaks. You have a postmortem. You add a step. You write more code, faster this time. It breaks in a new way. Another step. The steps accumulate. The breaches continue. Nobody asks why.

The reason is the same as it was with cars. The steps are trying to make developers more careful. But carefulness doesn't scale to AI-generated code any more than it scaled to 17 million cars.

—

A static secret is a password that doesn't change. You create it once, and it sits there, waiting to be used. Or stolen. It doesn't care which.

Every process around static secrets is a bet that nobody will ever leak one. That's a bet you will lose. AI has made the math much worse, because AI writes code faster than anyone can review it, and sometimes it writes code with secrets in it.

When Axios was hijacked, attackers scanned every compromised machine for credentials. They found AWS keys, database passwords, and API tokens: long-lived credentials that had been sitting on developer machines for months, some for years. That's what turned a bad breach into a catastrophic one.

—

The answer isn’t more process. It’s to build secrets that fail safely.

This is the crumple zone insight, applied to credentials. Don’t try to prevent every leak. Assume leaks will happen, and design so they don’t matter. Generate secrets on demand. Rotate them automatically. Scan your repos so leaked ones get caught before they ship. And move away from long-lived tokens entirely.
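"Generate on demand" and "rotate automatically" collapse into one idea when every credential expires on its own. Here is a minimal in-memory sketch in Python. `EphemeralBroker`, `Lease`, and the 15-minute default TTL are hypothetical names and values for illustration, not Infisical's API. A real broker would persist leases, authenticate callers, and create the credential in the target system (a database user, a cloud role) instead of returning an opaque random token.

```python
import secrets
import time
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Lease:
    token: str
    subject: str
    expires_at: float


@dataclass
class EphemeralBroker:
    """Hypothetical broker that mints short-lived credentials on demand."""

    default_ttl: float = 900.0  # 15 minutes: an illustrative value, not a recommendation
    clock: Callable[[], float] = time.monotonic  # injectable so expiry is testable
    leases: dict[str, Lease] = field(default_factory=dict)

    def issue(self, subject: str, ttl: Optional[float] = None) -> Lease:
        # A fresh random token per request, so there is no long-lived value to copy.
        lifetime = self.default_ttl if ttl is None else ttl
        lease = Lease(secrets.token_urlsafe(32), subject, self.clock() + lifetime)
        self.leases[lease.token] = lease
        return lease

    def validate(self, token: str) -> Optional[str]:
        """Return the lease's subject if the token is still live, else None."""
        lease = self.leases.get(token)
        if lease is None:
            return None
        if self.clock() >= lease.expires_at:
            # Expired: forget it, so a leaked copy is worthless from now on.
            del self.leases[token]
            return None
        return lease.subject

    def revoke(self, token: str) -> None:
        self.leases.pop(token, None)


if __name__ == "__main__":
    fake_now = [0.0]
    broker = EphemeralBroker(default_ttl=60, clock=lambda: fake_now[0])
    lease = broker.issue("ci-deploy")
    print(broker.validate(lease.token))  # ci-deploy
    fake_now[0] = 61.0                   # a minute later...
    print(broker.validate(lease.token))  # None: the leaked copy is dead
```

Rotation here is just issuing a new lease. The old one dies on schedule whether or not anyone remembers to revoke it.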

Better yet, eliminate secrets where you can. Use identity-based, tokenless authentication so there’s nothing static to copy in the first place.

None of this is new. What’s new is that we can’t afford not to do it anymore.

—

When an industry adds layers of process to solve a problem, the problem is usually in the foundation. Process is what you reach for when you can't or won't fix the underlying thing. Static secrets are a foundation we know how to change. We just haven't.

They were tolerable when code moved slowly and humans reviewed everything. With AI, neither is true anymore. At this scale, leaks aren’t edge cases. They’re the default.

The car industry spent fifty years trying to make drivers more careful before anyone thought to redesign the car. We've been trying to make developers more careful for about as long. AI has made it clear we're out of time.

The fix is to change the system so leaks don’t matter. That means short-lived, scoped, on-demand access. It means ephemeral secrets instead of static credentials. It means moving toward a world where copying a secret gets an attacker nothing, because it has already expired or because there was never a static secret to copy in the first place.
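Scoping is what limits the damage when a live credential does get copied. A minimal sketch in Python, assuming a hypothetical `ScopedCredential` that carries an explicit set of (action, resource) pairs and an expiry: `authorize` denies anything not listed, and the equally hypothetical `blast_radius` spells out what a thief holding a copy could do at a given moment.

```python
import time
from dataclasses import dataclass
from typing import FrozenSet, Optional, Tuple

Scope = Tuple[str, str]  # (action, resource), e.g. ("read", "db/orders")


@dataclass(frozen=True)
class ScopedCredential:
    """Hypothetical short-lived credential carrying explicit scopes."""

    subject: str
    scopes: FrozenSet[Scope]
    expires_at: float  # seconds since the epoch


def authorize(cred: ScopedCredential, action: str, resource: str,
              now: Optional[float] = None) -> bool:
    """Deny by default: allow only live credentials that list this exact scope."""
    now = time.time() if now is None else now
    if now >= cred.expires_at:
        return False
    return (action, resource) in cred.scopes


def blast_radius(cred: ScopedCredential, now: Optional[float] = None) -> FrozenSet[Scope]:
    """Everything an attacker holding a copy of `cred` could do at time `now`."""
    now = time.time() if now is None else now
    return cred.scopes if now < cred.expires_at else frozenset()


if __name__ == "__main__":
    cred = ScopedCredential(
        subject="billing-report-job",
        scopes=frozenset({("read", "db/orders")}),
        expires_at=time.time() + 600,  # ten minutes
    )
    print(authorize(cred, "read", "db/orders"))   # True
    print(authorize(cred, "write", "db/orders"))  # False: not in scope
    print(authorize(cred, "read", "db/users"))    # False: different resource
```

Real policy engines usually add wildcards and resource hierarchies; this sketch leaves them out so the deny-by-default rule stays obvious.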

That’s the direction we’re building toward at Infisical.